Running Jobs

Revision as of 04:36, 10 October 2023

Overview

The HPCC resources are grouped in three tiers: free tier (FT), advanced tier (AT) and condo tier (CT), plus a separate server, Arrow. Regardless of the server used, all jobs at HPCC must:

- Start from the user's directory on the scratch file system, /scratch/<userid>. Jobs cannot be started from users' home directories, /global/u/<userid>.

- Use the SLURM job submission system (job scheduler). All job submission scripts written for other job schedulers (e.g. PBS Pro) must be converted to SLURM syntax.

All users' data must be kept in the user's home directory, /global/u/<userid>. Data on /scratch can be purged at any time and are NOT protected by tape backup. Arrow and the CT servers mount an independent file system, HPFS, so data cannot be shared directly between the AT/FT servers and Arrow or the CT servers. Users must explicitly move files.
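The start-from-scratch rule can be checked at the top of a job script. The following is only a minimal sketch, not an HPCC-provided tool: the function name in_scratch is hypothetical, and the guard assumes the user ID is available in $USER.

```shell
#!/bin/bash
# Hypothetical helper: succeed only if a directory lies under /scratch/<userid>.
# Usage: in_scratch <directory> <userid>
in_scratch() {
    local dir="$1" user="$2"
    case "$dir" in
        "/scratch/$user"|"/scratch/$user"/*) return 0 ;;
        *) return 1 ;;
    esac
}

# Example guard for the top of a job script (assumes $USER holds the user ID).
if ! in_scratch "$PWD" "${USER:-unknown}"; then
    echo "Warning: $PWD is not under /scratch/${USER:-unknown}" >&2
fi
```

In a real job script the warning could be replaced by exit 1 so that a job started from the wrong place stops immediately.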

Advanced and Free Tier

Servers in FT and AT are Blue Moon, Penzias, CRYO and Appel. They are attached to separate /scratch and /global/u file systems (previously known as DSMS) via a 40 Gbps InfiniBand interconnect. The former is a separate, small, disk-based parallel file system, NFS-mounted on all nodes (compute and login). The latter is a large, slower file system holding all users' home directories (/global/u/<userid>) and mounted only on the servers' login nodes. Both file systems have a moderate bandwidth of several hundred MB per second. Because the scratch file system is mounted on all compute nodes, all jobs on any server must start from the /scratch/<userid> directory. Jobs cannot be started from the user's home directory, /global/u/<userid>. Users must preserve valuable files (data, executables, parameters, etc.) in /global/u/<userid>. Every home directory on the free and advanced tier servers has a quota of 50 GB, which can be expanded by submitting a request to HPCC stating the reasons for the required expansion. Note that the /global/u file system is backed up, with a backup retention time of 30 days.

Condo Tier and Arrow

As stated above, all jobs must start from the /scratch/<userid> directory, and valuable data must be kept in /global/u/<userid>. For Arrow and the condo servers, /scratch and /global/u reside on the same HPFS file system, reached over a 200 Gbps InfiniBand interconnect. The system software takes care of the optimal placement of files. Note that /global/u is not backed up to tape at this time due to lack of funds.

Copy/move files from/to server

This section is an overview. For details, please refer to the section "File Transfers".

From/to server in free and advanced tier

- by using the cea data transfer node

- by tunneling data (without copying) via the gateway (chizen)

- by using Globus Online

Copying data between a user's computer and chizen is discouraged. Chizen has limited memory and thus cannot handle large files.

From/to Arrow and any of condo servers

Only tunneling (not copying) via the gateway is supported. Note that Globus and cea are not accessible from the CT servers and Arrow.

Running jobs on servers from the advanced and free tiers

Partitions

The main partition, which distributes jobs to the other partitions, is production. Users must use this partition for all job submissions. The partition currently has a time limit of 120 hours. Note that the time limit, as well as the number of jobs per group and per user, is reviewed periodically and may change in order to maximize utilization of the resources. In addition, the MHN supports the partdev partition, which has a limit of 2 hours and is dedicated to code development.

Copy/move files from/to FT and AT servers

Before submitting any job to the FT/AT servers, users must prepare/move/copy data into their /scratch/<userid> directory. Users can transfer data to/from /scratch/<userid> by using the file transfer node cea or by using Globus Online. HPCC recommends transferring files to the user's home directory ( /global/u/<userid> ) first, and then copying the needed files from the home directory to /scratch/<userid>. Note that both cea and Globus Online allow the transfer of users' files directly to /global/u/<userid>. Input data, job scripts and parameter files can be generated locally with a Unix/Linux text editor such as Vi/Vim, Edit, Pico or Nano. Microsoft Word is a word processing system and cannot be used to create job submission scripts.
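The second step of the recommended workflow (home directory first, then scratch) could look like the sketch below. The helper name stage_to_scratch and the project and script names are hypothetical. The copy is assumed to run on a login node, since /global/u is mounted only there on FT/AT servers.

```shell
#!/bin/bash
# Hypothetical helper: copy an input directory from home into a scratch work area.
# Usage: stage_to_scratch <source_dir> <destination_parent_dir>
stage_to_scratch() {
    local src="$1" dest="$2"
    [ -d "$src" ] || { echo "No such directory: $src" >&2; return 1; }
    mkdir -p "$dest" && cp -a "$src" "$dest"/
}

# Intended use on a login node (project and script names are placeholders):
#   stage_to_scratch "/global/u/$USER/my_project" "/scratch/$USER"
#   cd "/scratch/$USER/my_project" && sbatch job.sh
```

After the job finishes, valuable results would be copied back the same way in the opposite direction, because /scratch can be purged at any time.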

Set up application environment

FT and AT servers use "Modules" to set up the environment. "Modules" makes it easier for users to run a standard or customized application and/or system environment. On AT and FT the HPCC uses classical TCL UNIX modules and LMOD, an advanced module system. The latter addresses the MODULEPATH hierarchy problem common in UNIX-based "modules" implementations. Application packages can be loaded and unloaded cleanly through the module system using modulefiles. This includes easily adding or removing directories in the PATH environment variable. Modulefiles for library packages provide environment variables that specify where the library and header files can be found. All the popular shells are supported: bash, ksh, csh, tcsh, zsh. LMOD is also available for Perl and Python. It is important to mention that LMOD can interpret TCL module files. The basic TCL module commands are listed below. Note that almost all applications have a default version and several other versions. The default version is marked with (D). For example:

python/2.7.13_anaconda (D)

denotes the default version of Python, which can be loaded without explicitly specifying the version of the software:

module load python

Any other, non-default version of the same software can be loaded by specifying the full name of the module file.

module load python/3.7.6_anaconda

will load the non-default 3.7.6 version of Python. The module load command can be used to load several application environments at once:

module load package1 package2 ...

For documentation on “Modules”:

man module

For help enter:

module help

To see a list of currently loaded “Modules” run:

module list

To see a complete list of all modules available on the system run:

module avail

To show content of a module enter:

module show <module_name>

To change from one application to another (for example, between the default versions of the GNU and Intel compilers):

module swap gcc intel

To go back to an initial set of modules:

module reset

Using LMOD commands

To get a list of all modules available

module spider

To get information about a specific module

module spider python

Modules for the advanced user

A “Modules” example for advanced users who need to change their environment.

The HPC Center supports a number of different compilers, libraries, and utilities. In addition, at any given time different versions of the software may be installed. “Modules” is employed to define a default environment, which generally satisfies the needs of most users and eliminates the need for the user to create the environment. From time to time, a user may have a specific requirement that differs from the default environment.

In this example, the user wishes to use a version of the NETCDF library on the HPC Center's Cray XE6 (SALK) that is compiled with the Portland Group, Inc. (PGI) compiler instead of the installed default version, which was compiled with the Cray compiler. The approach is:

- Run module list to see what modules are loaded by default.

- Determine what modules should be unloaded.

- Determine what modules should be loaded.

- Add the needed modules, i.e., module load

The first step, see what modules are loaded, is shown below.

user@arrow:~> module list

Currently Loaded Modulefiles:

1) modules/3.2.6.6

2) nodestat/2.2-1.0400.31264.2.5.gem

3) sdb/1.0-1.0400.32124.7.19.gem

4) MySQL/5.0.64-1.0000.5053.22.1

5) lustre-cray_gem_s/1.8.6_2.6.32.45_0.3.2_1.0400.6453.5.1-1.0400.32127.1.90

6) udreg/2.3.1-1.0400.4264.3.1.gem

7) ugni/2.3-1.0400.4374.4.88.gem

8) gni-headers/2.1-1.0400.4351.3.1.gem

9) dmapp/3.2.1-1.0400.4255.2.159.gem

10) xpmem/0.1-2.0400.31280.3.1.gem

11) hss-llm/6.0.0

12) Base-opts/1.0.2-1.0400.31284.2.2.gem

13) xtpe-network-gemini

14) cce/8.0.7

15) acml/5.1.0

16) xt-libsci/11.1.00

17) pmi/3.0.0-1.0000.8661.28.2807.gem

18) rca/1.0.0-2.0400.31553.3.58.gem

19) xt-asyncpe/5.13

20) atp/1.5.1

21) PrgEnv-cray/4.0.46

22) xtpe-mc8

23) cray-mpich2/5.5.3

24) SLURM/11.3.0.121723

From the list, we see that the Cray Programming Environment (PrgEnv-cray/4.0.46) and the Cray Compiler Environment (cce/8.0.7) are loaded by default. To unload these Cray modules and load the PGI equivalents, we need to know the names of the PGI modules. The module avail command shows this.

user@SALK:~> module avail • • •

We see that there are several versions of the PGI compilers and two versions of the PGI Programming Environment installed. For this example, we are interested in loading PGI's 12.10 release (not the default, which is pgi/12.6) and the most current release of the PGI programming environment (PrgEnv-pgi/4.0.46), which is the default.

The following module commands will unload the Cray defaults, load the PGI modules mentioned, and load version 4.2.0 of NETCDF compiled with the PGI compilers.

user@SALK:~> module unload PrgEnv-cray

user@SALK:~> module load PrgEnv-pgi

user@SALK:~> module unload pgi

user@SALK:~> module load pgi/12.10

user@SALK:~>

user@SALK:~> module load netcdf/4.2.0

user@SALK:~>

user@SALK:~> cc -V

/opt/cray/xt-asyncpe/5.13/bin/cc: INFO: Compiling with CRAYPE_COMPILE_TARGET=native.

pgcc 12.10-0 64-bit target on x86-64 Linux

Copyright 1989-2000, The Portland Group, Inc. All Rights Reserved.

Copyright 2000-2012, STMicroelectronics, Inc. All Rights Reserved.

A few additional comments:

- The first three commands do not include version numbers and will therefore load or unload the current default versions.

- In the third line, we unload the default version of the PGI compiler (version 12.6), which is loaded with the rest of the PGI Programming Environment in the second line. We then load the non-default and more recent release from PGI, version 12.10, in the fourth line.

- Later, we load NETCDF version 4.2.0 which, because we have already loaded the PGI Programming Environment, will load the version of NETCDF 4.2.0 compiled with the PGI compilers.

- Finally, we check which compiler the Cray "cc" compiler wrapper actually invokes after this sequence of module commands by entering cc -V.

Running jobs on Arrow and condo servers

The Arrow server and the condo servers are attached via a 200 Gbps InfiniBand interconnect to a separate hybrid (NVMe + hard disks) fast file system called HPFFS, which can provide speeds of 25-30 GB/s write and 45-50 GB/s read. /scratch and /global/u are part of the same HPFFS file system, but scratch is optimized for predominant access to the fast NVMe tier of HPFFS. The underlying file system manages the placement of files to ensure the best possible performance for different file types. All jobs must start from the /scratch/<userid> directory. Jobs cannot be started from the user's home directory, /global/u/<userid>. It is important to mention that data on /global/u on the HPFFS file system are not backed up, since this equipment is not integrated into the HPCC infrastructure. Every user home directory has a quota of 100 GB, which can be expanded by submitting a motivated request to HPCC.

Partitions and QOS access

The Arrow server has one public partition and seven private partitions. The public partition is open to all core users of NSF grant (). The private partitions are restricted to the owners of the resources. Access to each partition is controlled by a Quality of Service (QOS) function: only users registered for a particular partition, with matching QOS credentials, are allowed to run jobs there. Simply put, any job from an unauthorized user will be rejected. The table below summarizes the partitions:

| Partition name | Type | QOS | Cores | GPU | GPU type | Users allowed | Time limits | Core limits | Memory limits | Jobs limits | Type of jobs |
|---|---|---|---|---|---|---|---|---|---|---|---|
| partnsf | public | qosnsf | 256/512 | 16 | A100/80 GB | all core users of NSF grant () | yes | 128 | 8G per cpucore | 30/user; 50 per group | Serial, OpenMP, MPI |
| partmath | private | qosmath | 192 | 2 | A40/48 GB | members of prof. Kuklov and prof. Poje groups | yes | no | no | no | Serial, OpenMP, MPI |
| partcfd | private | high | 128 | 2 | A40/48 GB | members of prof. Poje group | no | no | no | no | Serial, OpenMP, MPI |
| partphys | private | high | 64 | 0 | NA | members of prof. Kuklov group | no | no | no | no | Serial, OpenMP, MPI |
| partchem | private | qoschem | 192 | 10 | A30/24 GB + A100/40 GB | members of prof. Loverde group | no | no | no | no | Serial, OpenMP, MPI |
| partsym | private | qossmhigh | 64 | 2 | A100/40 GB | members of prof. Loverde group | no | no | no | no | Serial, OpenMP, MPI |
| partasrc | private | qosasrchigh | 64 | 2 | A30/24 GB | members of ASRC group | no | no | no | no | Serial, OpenMP, MPI |
| parteng | private | qoseng | 128 | 2 | A40/48 GB | members of prof. Vaishampayan group | no | no | no | no | Serial, OpenMP, MPI |

Copy files from/to Arrow and condo servers

Because Arrow and the CT servers are connected only to HPFFS and are detached from the main HPC infrastructure, user files can only be transferred to Arrow through the ssh tunneling mechanism. Users cannot use Globus Online and/or Cea to transfer files between the new and old file systems, nor can they use Cea and Globus Online to transfer files from their local devices to Arrow's file system. However, ssh tunneling offers an alternative way to securely transfer files to Arrow over the Internet, using the ssh protocol and Chizen as an ssh server. Users are encouraged to contact HPCC for further guidance. Here is an example of tunneling via Chizen:

scp -J <user_id>@chizen.csi.cuny.edu <file_to_transfer> <user_id>@arrow:/scratch/<user_id>/.

Users must enter their password twice: once for Chizen and once for Arrow.

Files are tunneled through but not copied to chizen. Note that files copied to Chizen will be removed.
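The same tunneling command can be used in both directions. The sketch below only prints the scp command lines rather than running them. The helper name arrow_scp_cmd, the user ID jdoe and the file names are hypothetical. The host names are the ones used in the example above.

```shell
#!/bin/bash
# Hypothetical helper: print (not run) the scp command for a tunneled transfer.
# "to" copies a local file to Arrow; "from" fetches a file from Arrow.
# Usage: arrow_scp_cmd <to|from> <user_id> <file>
arrow_scp_cmd() {
    local direction="$1" user="$2" file="$3"
    local jump="$user@chizen.csi.cuny.edu"
    local remote="$user@arrow:/scratch/$user"
    case "$direction" in
        to)   echo "scp -J $jump $file $remote/." ;;
        from) echo "scp -J $jump $remote/$file ." ;;
        *)    echo "usage: arrow_scp_cmd <to|from> <user_id> <file>" >&2; return 1 ;;
    esac
}

arrow_scp_cmd to jdoe input.dat
arrow_scp_cmd from jdoe results.tar.gz
```

Printing the command first makes it easy to check the user ID and paths before pasting the line into a terminal on the local machine.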

Set up execution environment on Arrow and CT servers

Overview of LMOD environment modules system

Each application, library and executable requires a specific environment. In addition, many software and system packages exist in different versions. To ensure the proper environment for every application, library or piece of system software, CUNY-HPCC uses an environment module system, which provides a quick and easy way to dynamically change the user's environment through modules. Each module is a file that describes the environment needed for a package. Modulefiles may be shared by all users on a system, and users may have their own collections of module files. Note that on the old servers (Penzias, Appel) HPCC utilizes a TCL-based module management system, which has fewer capabilities than LMOD. On Arrow HPCC uses only the LMOD environment management system. The latter is Lua based and is able to resolve hierarchies. It is important to mention that LMOD understands and accepts TCL modules, so a user's modules existing on Appel or Penzias can be transferred and used directly on Arrow. LMOD also allows the use of shortcuts; for instance, ml can be used in place of the command module load. In addition, users may create collections of modules and store them under a particular name. These collections can be used for a "fast load" of needed modules, or to supplement or replace the shared modulefiles.
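As an illustration of collections and shortcuts, the sketch below uses the standard Lmod commands module save, module savelist and module restore together with the ml shortcut. The collection name build_env is hypothetical, and the two module names are the ones used as examples elsewhere on this page. The commands run only when module is defined in the current shell.

```shell
#!/bin/bash
# Sketch only: runs the Lmod commands when "module" is defined, otherwise says so.
collection="build_env"   # hypothetical collection name

if command -v module >/dev/null 2>&1; then
    module load Utils/Cmake/3.26.4 Net/hpcx/2.15   # module names taken from this page
    module save "$collection"      # store the currently loaded set under a name
    module savelist                # list saved collections
    module purge                   # start from a clean environment
    module restore "$collection"   # "fast load" of the saved set
    ml                             # shortcut: with no arguments, same as "module list"
else
    echo "module command not available in this shell"
fi
```

With Lmod, ml followed by a module name behaves like module load, and ml followed by a name prefixed with a minus sign unloads that module.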

Modules categories

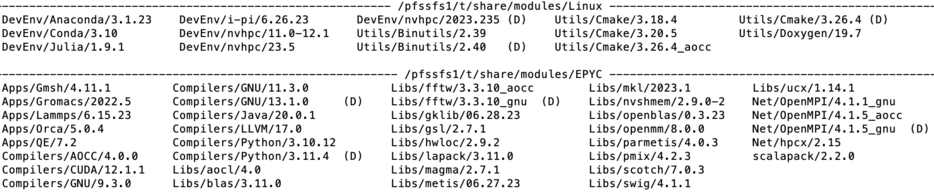

module category Library

Lmod modules are organized in categories. On Arrow the categories are Compilers, Libraries (Libs), Utilities (Util), Applications, Development Environments (DevEnv) and Communication (Net). To check the content of each category, users may use the command module category <name of the category>. The picture above shows the output. In addition, the version of the product is shown in the module file name. Thus the line

Compilers/GNU/13.1.0

shown in the EPYC directory denotes the module file for the GNU (C/C++/Fortran) compiler version 13.1.0, tuned for the AMD architecture.

List of available modules

To get list of available modules the users may use the command

module avail

The output of this command for the Arrow server is shown below. The (D) after a module's name denotes that this module is the default. The (L) denotes that the module is already loaded.

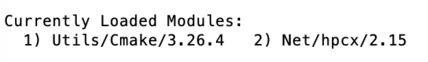

Load module(s) and check for loaded modules

The command module load <name of the module> or module add <name of the module> loads the requested module. For example, the commands below load the modules for the cmake utility and the network interface. Users may check which modules are already loaded by typing module list. The figure below shows the output of this command.

module load Utils/Cmake/3.26.4

module add Net/hpcx/2.15

module list

Another command equivalent to module load is module add, as shown in the example above.

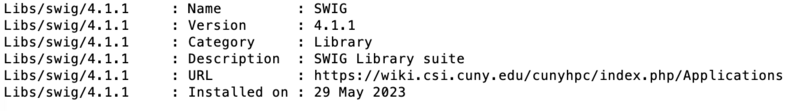

Module details

Information about a module is available via the whatis command. For example, for the swig library:

module whatis Libs/swig

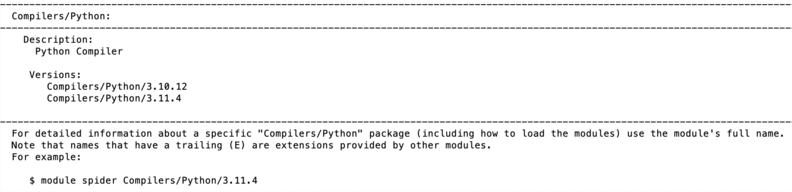

Searching for modules

Modules can be searched with the module spider command. For instance, a search for Python modules is started with:

module spider Python

Each modulefile holds the information needed to configure the shell environment for a specific software application, or to provide access to specific software tools and libraries. Modulefiles may be shared by all users on a system, and users may have their own collections of module files. The users' collections may be used for a "fast load" of needed modules or to supplement or replace the shared modulefiles.

Compiling user's developed codes on Arrow

The Arrow login node is an Intel x86_64 server with two K20m GPUs. Codes can be compiled there and the executables can run on the AMD nodes, but only with basic x86_64/AMD compatibility. For better results HPCC recommends the following:

- compile codes directly on nodes where the codes will be run.

- use AMD optimized libraries such as ACML and AMD tuned compilers (AOCC). Users should read AOCC user manual for optimization options.

- the GNU compilers can be used as well but optimal performance on nodes is not guaranteed.

To compile code directly on a node, HPCC recommends that users submit a batch job (the alternative is to use an interactive job, see below). Here is an example of compiling a parallel FORTRAN 77 code on a node that is a member of a particular partition.

#!/bin/bash

#SBATCH --nodes=1 # request for one node

#SBATCH --job-name=<job_name>

#SBATCH --partition=<partition where to compile> # one of the partitions where the user is registered

#SBATCH --qos=<qos for group e.g. qosmath>

#SBATCH --ntasks=1

#SBATCH --mem=64G

echo $SLURM_CPUS_PER_TASK

module purge

module load Compilers/AOCC/4.0.0 # load compiler

module load Net/OpenMPI/4.1.5_aocc # load OpenMPI library

mpif77 -o <executable> -O.. <source> #invokes compilation. Add appropriate optimization flags

Batch job submission system (SLURM)

The section below describes the use of the SLURM batch job submission system on Arrow. However, many of the examples can also be used on older servers like Penzias or Appel. Note that Penzias has outdated K20m GPUs, so take care to specify the GPU type correctly in GPU constraints. SLURM is an open-source scheduler and batch system implemented at HPCC. SLURM is used on all servers to submit jobs.

SLURM script structure

A Slurm script must do three things:

- prescribe the resource requirements for the job

- set the environment

- specify the work to be carried out in the form of shell commands

A simple SLURM script is given below:

#!/bin/bash

#SBATCH --job-name=test_job # some short name for a job

#SBATCH --nodes=1 # node count

#SBATCH --ntasks=1 # total number of tasks across all nodes

#SBATCH --cpus-per-task=1 # cpu-cores per task (>1 if multi-threaded tasks)

#SBATCH --mem-per-cpu=16G # memory per cpu-core

#SBATCH --time=00:10:00 # total run time limit (HH:MM:SS)

#SBATCH --mail-type=begin # send email when job begins

#SBATCH --mail-type=end # send email when job ends

#SBATCH --mail-user=<valid user email>

cd $SLURM_SUBMIT_DIR # change to the directory from which the job was submitted

The first line of the Slurm script above specifies the Linux/Unix shell to be used. It is followed by a series of #SBATCH directives, which set the resource requirements and other parameters of the job. The script above requests 1 CPU-core and 16 GB of memory for 10 minutes of run time. Note that #SBATCH is a command to SLURM, while a # not followed by SBATCH is interpreted as a comment. Users can submit 2 types of jobs, batch jobs and interactive jobs:

sbatch <name-of-slurm-script> submits job to the scheduler

salloc requests an interactive job on compute node(s) (see below)
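After submission it is often useful to keep the job ID so that the job can be followed with squeue. The sketch below assumes that sbatch prints its usual confirmation line, "Submitted batch job <id>". The helper name job_id_from_sbatch and the script name test_job.sh are hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: extract the numeric job ID from sbatch's confirmation
# line, which normally reads "Submitted batch job <id>".
job_id_from_sbatch() {
    local line="$1"
    local id="${line##* }"            # keep the text after the last space
    case "$id" in
        ''|*[!0-9]*) return 1 ;;      # reject anything that is not a number
        *) echo "$id" ;;
    esac
}

# Intended use on a login node (the script name is a placeholder):
#   jobid=$(job_id_from_sbatch "$(sbatch test_job.sh)") && squeue -j "$jobid"
job_id_from_sbatch "Submitted batch job 123456"
```

The stored ID can also be passed to scancel to remove the job from the partition.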

Job(s) execution time

The total job time is the sum of the time the job waits in a SLURM partition (queue) before being executed on node(s) and the actual running time on the node(s). For parallel codes, the partition time (the time the job waits in the partition) increases with the amount of requested resources, such as the number of CPU-cores. On the other hand, the execution time (time on nodes) decreases roughly inversely with the resources. Each job has its own "sweet spot" which minimizes the time to solution. Users are encouraged to make several test runs to figure out what amount of requested resources works best for their job(s).
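One way to organize such test runs is to submit the same script with several core counts, since options given on the sbatch command line take precedence over the #SBATCH lines in the script. The sketch below only prints the commands and submits nothing. The helper name scaling_cmds, the script name multithread.sh and the chosen core counts are assumptions made for illustration.

```shell
#!/bin/bash
# Hypothetical helper: print one sbatch command per core count so the same
# script can be timed at several sizes. Nothing is submitted by this function.
# Usage: scaling_cmds <slurm_script> <cores> [<cores> ...]
scaling_cmds() {
    local script="$1"; shift
    local n
    for n in "$@"; do
        echo "sbatch --job-name=scale_$n --cpus-per-task=$n $script"
    done
}

scaling_cmds multithread.sh 1 2 4 8 16
```

Once the test jobs have finished, sacct -j <jobid> --format=JobID,Submit,Start,Elapsed shows the submission time, start time and run time of each job, from which the waiting time and the time to solution can be compared.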

Working with QOS and partitions on Arrow

Every job submission script on Arrow must specify the proper QOS and partition. For instance, all jobs intended to use node n133 must have the following lines:

#SBATCH --qos=qoschem

#SBATCH --partition partchem

In a similar way, all jobs intended to use n130 and n131 must have in their job submission script:

#SBATCH --qos=qosnsf

#SBATCH --partition partnsf

Note that Penzias does not use QOS. Thus users must adapt scripts they copy from the Penzias server to match the QOS requirements on Arrow.
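When adapting scripts, the QOS that belongs to a partition can be looked up from the partition table on this page. The sketch below encodes that table as it stands at the time of this revision. The helper name qos_for_partition is hypothetical, and the mapping would need updating whenever the table changes.

```shell
#!/bin/bash
# Hypothetical helper: map an Arrow partition name to its QOS, following the
# partition table on this page. Returns non-zero for unknown partitions.
qos_for_partition() {
    case "$1" in
        partnsf)            echo qosnsf ;;
        partmath)           echo qosmath ;;
        partcfd|partphys)   echo high ;;
        partchem)           echo qoschem ;;
        partsym)            echo qossmhigh ;;
        partasrc)           echo qosasrchigh ;;
        parteng)            echo qoseng ;;
        *) echo "unknown partition: $1" >&2; return 1 ;;
    esac
}

# Print the two directives to paste into a job script.
partition=partchem
if qos=$(qos_for_partition "$partition"); then
    echo "#SBATCH --partition=$partition"
    echo "#SBATCH --qos=$qos"
fi
```

The lookup does not grant access: a job is still rejected unless the user is registered for the partition.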

Submitting serial (sequential) jobs

These jobs utilize only a single CPU-core. Below is a sample Slurm script for a serial job in partition partchem. Users must set the QOS and partition lines as explained above:

#!/bin/bash

#SBATCH --job-name=serial_job # short name for job

#SBATCH --nodes=1 # node count always 1

#SBATCH --ntasks=1 # total number of tasks always 1

#SBATCH --cpus-per-task=1 # cpu-cores per task (>1 if multi-threaded tasks)

#SBATCH --mem-per-cpu=8G # memory per cpu-core

#SBATCH --qos=qoschem

#SBATCH --partition partchem

cd $SLURM_SUBMIT_DIR

srun ./myjob

In above script requested resources are:

- --nodes=1 - specify one node

- --ntasks=1 - claim one task (by default 1 per CPU-core)

Job can be submitted for execution with command:

sbatch <name of the SLURM script>

For instance if the above script is saved in file named serial_j.sh the command will be:

sbatch serial_j.sh

Submitting multithread job

Some software, like MATLAB or GROMACS, is able to use multiple CPU-cores via shared-memory parallel programming models like OpenMP, pthreads or Intel Threading Building Blocks (TBB). OpenMP programs, for instance, run as multiple "threads" on a single node, with each thread using one CPU-core. The example below shows how to run a thread-parallel job on Arrow. Users must add lines for QOS and partition as explained above.

#!/bin/bash

#SBATCH --job-name=multithread # create a short name for your job

#SBATCH --nodes=1 # node count

#SBATCH --ntasks=1 # total number of tasks across all nodes

#SBATCH --cpus-per-task=4 # cpu-cores per task (>1 if multi-threaded tasks)

#SBATCH --mem-per-cpu=4G # memory per cpu-core

export OMP_NUM_THREADS=$SLURM_CPUS_PER_TASK

In this script --cpus-per-task is mandatory so that SLURM can run the multithreaded task using four CPU-cores. The correct choice of cpus-per-task is very important, because increasing this parameter typically decreases execution time but increases waiting time in the partition (queue). In addition, these types of jobs rarely scale well beyond 16 cores. The optimal value of cpus-per-task must be determined empirically by conducting several test runs. It is important to remember that the code must 1. be multithreaded code and 2. be compiled with a multithreading option, for instance the -fopenmp flag in the GNU compilers.
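A slightly more defensive way to set the thread count is sketched below. It falls back to one thread when SLURM_CPUS_PER_TASK is not set, which happens when --cpus-per-task is omitted or when the lines are tried outside a job. The executable and source file names in the comments are placeholders.

```shell
#!/bin/bash
# Use the core count granted by SLURM; fall back to 1 thread when the variable
# is unset (for example, when --cpus-per-task was omitted or outside a job).
export OMP_NUM_THREADS="${SLURM_CPUS_PER_TASK:-1}"
echo "Running with $OMP_NUM_THREADS OpenMP thread(s)"

# Hypothetical run line; "./my_threaded_code" is a placeholder executable,
# built for example with: gcc -fopenmp -O2 -o my_threaded_code my_code.c
#   srun ./my_threaded_code
```

Without the fallback, an unset variable leaves OMP_NUM_THREADS empty and the thread count is then chosen by the OpenMP runtime rather than by the job script.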

Submitting distributed parallel job

These jobs use Message Passing Interface to realize the distributed-memory parallelism across several nodes. The script below demonstrates how to run MPI parallel job on Arrow. Users must add lines for QOS and partition as was explained above.

#!/bin/bash

#SBATCH --job-name=MPI_job # short name for job

#SBATCH --nodes=2 # node count

#SBATCH --ntasks-per-node=32 # number of tasks per node

#SBATCH --cpus-per-task=1 # cpu-cores per task (>1 if multi-threaded tasks)

#SBATCH --mem-per-cpu=16G # memory per cpu-core

cd $SLURM_SUBMIT_DIR

srun ./mycode <args> # mycode is in the local directory. For other locations provide the full path

The above script can easily be modified for hybrid (OpenMP+MPI) jobs by changing the --cpus-per-task parameter. The optimal values of --nodes and --ntasks for a given code must be determined empirically with several test runs. In order to decrease communication, users should try to run large jobs on a whole node rather than on 2 chunks taken from 2 (or more) nodes. In addition, for large-memory jobs users must use --mem rather than --mem-per-cpu. Below is a SLURM script example for submission of a large-memory MPI job with 128 cores on a single node. Obviously it is better to run this type of job on a single node rather than on 2 times 64 cores from 2 nodes. To achieve that, users may use the following SLURM prototype script:

#!/bin/bash

#SBATCH --job-name MPI_J_2

#SBATCH --nodes 1

#SBATCH --ntasks 128 # total number of tasks

#SBATCH --mem 40G # total memory per job

#SBATCH --qos=qoschem

#SBATCH --partition partchem

cd $SLURM_SUBMIT_DIR

srun ...

In the above script the requested resources are 128 cores on one node. Note that unused memory on this node will not be accessible to other jobs. Unlike in the previous script, the memory is specified as the total memory for the job via the --mem parameter.

Submitting Hybrid (OMP+MPI) job on Arrow

#!/bin/bash

#SBATCH --job-name=OMP_MPI # name of the job

#SBATCH --ntasks=24 # total number of tasks aka total # of MPI processes

#SBATCH --nodes=2 # total number of nodes

#SBATCH --ntasks-per-node=12 # number of tasks per node

#SBATCH --cpus-per-task=2 # number of OMP threads per MPI process

#SBATCH --mem-per-cpu=16G # memory per cpu-core

#SBATCH --partition=partnsf

#SBATCH --qos=qosnsf

cd $SLURM_SUBMIT_DIR

export OMP_NUM_THREADS=$SLURM_CPUS_PER_TASK

export SRUN_CPUS_PER_TASK=$SLURM_CPUS_PER_TASK

srun ...

The above script is a prototype and shows how to allocate 24 MPI processes, 12 per node. Each MPI process starts 2 OMP threads. For an actual working script, users must adjust the QOS and partition information and their memory requirements.
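The resource request of the hybrid script can be checked with simple arithmetic before submission. The sketch below only recomputes the totals from the numbers in the script above. The variable names are chosen for illustration.

```shell
#!/bin/bash
# Values copied from the hybrid script above.
nodes=2
tasks_per_node=12
cpus_per_task=2
mem_per_cpu_gb=16

ntasks=$(( nodes * tasks_per_node ))                   # MPI processes in total
cores_per_node=$(( tasks_per_node * cpus_per_task ))   # cores used on each node
total_cores=$(( ntasks * cpus_per_task ))              # cores used by the job
mem_per_node_gb=$(( cores_per_node * mem_per_cpu_gb )) # memory requested per node

echo "MPI processes:   $ntasks"
echo "Cores per node:  $cores_per_node"
echo "Total cores:     $total_cores"
echo "Memory per node: ${mem_per_node_gb} GB"
```

With these numbers the job asks for 24 MPI processes, 48 cores and 384 GB per node. Since the partition table lists a memory limit of 8G per core for partnsf, the --mem-per-cpu value is one of the settings that would need adjusting.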

GPU jobs

On Arrow each of the nodes has 8 A100 GPUs with 80 GB on board. To use GPUs in a job, users must add the --gres option in an #SBATCH line, alongside the CPU resource requests. The example below demonstrates a GPU-enabled SLURM script. Users must add lines for QOS and partition as explained above.

#!/bin/bash

#SBATCH --job-name=GPU_J # short name for job

#SBATCH --nodes=1 # number of nodes

#SBATCH --ntasks=1 # total number of tasks across all nodes

#SBATCH --cpus-per-task=1 # cpu-cores per task (>1 if multi-threaded tasks)

#SBATCH --mem-per-cpu=16G # memory per cpu-core

#SBATCH --gres=gpu:1 # number of gpus per node max 8 for Arrow

cd $SLURM_SUBMIT_DIR

srun ... <code> <args>

GPU constraints

On Arrow the nodes in partnsf, partchem and partmath have different GPU types (A30, A40 and A100). The type of GPU can be specified in SLURM by using a constraint on the GPU SKU, GPU generation, or GPU compute capability. Here are examples:

#SBATCH --gres=gpu:1 --constraint='gpu_sku:V100' # allocates one V100 GPU

#SBATCH --gres=gpu:1 --constraint='gpu_gen:Ampere' # allocates one Ampere GPU (A40 or A100)

#SBATCH --gres=gpu:1 --constraint='gpu_cc:12.0' # allocates GPU by compute capability (generation)

#SBATCH --gres=gpu:1 --constraint='gpu_mem:32GB' # allocates GPU with 32GB memory on board

#SBATCH --gres=gpu:1 --constraint='nvlink:2.0' # allocates GPU linked with NVLink

Parametric jobs via Job Array

Job arrays are used for running the same job multiple times but with only slightly different parameters. The below script demonstrates how to run such a job on Arrow. Users must add lines for QOS and partition as was explained above. NB! The array numbers must be less than the maximum number of jobs allowed in the array.

#!/bin/bash

#SBATCH --job-name=Array_J # short name for job

#SBATCH --nodes=1 # node count

#SBATCH --ntasks=1 # total number of tasks across all nodes

#SBATCH --cpus-per-task=1 # cpu-cores per task (>1 if multi-threaded tasks)

#SBATCH --mem-per-cpu=16G # memory per cpu-core

#SBATCH --output=slurm-%A.%a.out # stdout file (standard out)

#SBATCH --error=slurm-%A.%a.err # stderr file (standard error)

#SBATCH --array=0-3 # job array indexes 0, 1, 2, 3

cd $SLURM_SUBMIT_DIR

<executable>
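Inside each array element SLURM sets the variable SLURM_ARRAY_TASK_ID to the element's index, which is the usual way to select the "slightly different parameters". The sketch below assumes a hypothetical list of four values, one per index of --array=0-3. The program name in the comment is a placeholder.

```shell
#!/bin/bash
# Hypothetical parameter list: one entry per array index 0..3.
params="0.1 0.5 1.0 2.0"

# SLURM sets SLURM_ARRAY_TASK_ID for each array element; default to 0 so the
# snippet can also be tried outside a job.
task_id="${SLURM_ARRAY_TASK_ID:-0}"

# cut counts fields from 1, array indexes start at 0.
param=$(echo "$params" | cut -d' ' -f "$(( task_id + 1 ))")

echo "Array task $task_id uses parameter $param"
# Hypothetical run line with a placeholder executable:
#   ./my_program --value "$param" > "result_${task_id}.txt"
```

Each element then writes its own output file, in the same way that %a separates the stdout and stderr files in the script above.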

Interactive jobs

These jobs are useful in the development or test phase and are rarely required in a workflow. It is not recommended to use interactive jobs as the main type of job, since they consume more resources than regular batch jobs. To set up an interactive job, users first have to 1. start an interactive shell and 2. "reserve" the resources. The example below describes that.

srun -p interactive --pty /bin/bash # starts interactive session

Once the interactive session is running the users must "reserve" resources needed for actual job:

salloc --ntasks=8 --ntasks-per-node=1 --cpus-per-task=2 # allocates resources

salloc: CPU resource required, checking settings/requirements...

salloc: Granted job allocation ....

salloc: Waiting for resource configuration

salloc: Nodes ... # system reports back where the resources were allocated