Running Jobs

Running jobs on any HPCC server

Overview

Running a job on any HPCC production server requires two steps. In the first step, users must prepare:

- Input data file(s) for the job.

- Parameter file(s) for the job, if applicable (these may also be in a subdirectory, referenced by an explicit path).

The files must be placed in the user's /scratch/<userid> directory or one of its subdirectories. Jobs cannot start from home directories (/global/u/<userid>).

In the second step, users must:

- Set up the execution environment by loading the proper module(s).

- Write a correct job submission script that holds the computational parameters of the job (i.e. the number of cores needed, amount of memory, run time, etc.).

The job submission script must also be placed in /scratch/<userid>.
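The first step above can be sketched as a small shell helper. This is only an illustration, not an HPCC-provided tool: the function name stage_job and the file names in the usage note are hypothetical, and the source and scratch directories are passed as arguments so that the same function works for any directory layout.

```shell
#!/bin/bash
# Hypothetical helper: copy input and parameter files from a source
# directory (for example under /global/u/<userid>) into a new job
# directory under scratch (for example /scratch/<userid>/<job_name>).
stage_job() {
    local src_dir=$1      # where the prepared files currently live
    local scratch_dir=$2  # the user's scratch directory
    local job_name=$3     # name of the job subdirectory to create
    shift 3               # remaining arguments: files to copy

    local job_dir="$scratch_dir/$job_name"
    mkdir -p "$job_dir" || return 1

    local f
    for f in "$@"; do
        # Fail early if a required file is missing.
        if [ ! -f "$src_dir/$f" ]; then
            echo "missing file: $src_dir/$f" >&2
            return 1
        fi
        cp "$src_dir/$f" "$job_dir/" || return 1
    done

    # Print the job directory so the caller can cd into it.
    echo "$job_dir"
}
```

A call would look like stage_job /global/u/<userid>/myTask /scratch/<userid> <job_name> input.dat params.in, where input.dat and params.in stand for the user's own files.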

File systems on Penzias, Appel and Karle

These servers use two separate file systems: /global/u and scratch. Scratch is a small, fast file system mounted on all nodes. /global/u is a large but slower file system that holds all users' home directories (/global/u/<userid>) and, on these servers, is mounted only on the login node. All jobs must start from the scratch file system; jobs cannot be submitted from the main file system.

Creating and transferring input/output data and parameter files

The input data and parameter file(s) can be generated locally or transferred directly to /scratch/<userid> using the file transfer node (cea) or GlobusOnline. In both cases HPCC recommends transferring the files to the user's home directory (/global/u/<userid>) first and then copying the needed files from there to /scratch/<userid>. In addition, these files can be transferred from the user's local storage (e.g. a laptop) to DSMS (/global/u/<userid>) using cea and/or Globus. The submission script must be created with a Unix/Linux text editor such as Vi/Vim, Edit, Pico or Nano. Microsoft Word is a word processor and cannot be used to create job submission scripts.

File systems on Arrow

Arrow is attached to an NSF-funded 2 PB global hybrid file system, which holds both users' home directories (/global/u/<userid>) and users' scratch directories (/scratch/<userid>). The underlying file system manages the placement of files to ensure the best possible performance for different file types. It is important to remember that only scratch directories are visible on the nodes. Consequently, jobs can be submitted only from the /scratch/<userid> directory. Users must preserve valuable files (data, executables, parameters, etc.) in /global/u/<userid>.

Creating and transferring input/output data and parameter files

The Arrow file system is not yet integrated with the HPCC file transfer infrastructure, so users cannot use GlobusOnline or the file transfer node as described above. Users can only create parameter file(s) and job submission scripts directly in /scratch/<userid>. Input data and other large files must be tunneled to Arrow. Users are encouraged to contact HPCC for the actual procedure.

Copy or move files between Arrow and other servers

Because Arrow is detached from the main HPC infrastructure, files can only be tunneled between these servers using secure copy (scp) or sftp. Users cannot use Globus Online and/or cea to transfer files between the new and old file systems, nor can they use cea and Globus to transfer files from their local devices to Arrow's file system. SSH tunneling allows files to be transferred to Arrow over the Internet using the ssh protocol, with Chizen as the ssh server.
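The tunneled copy described above might look like the following sketch. It assumes that the tunnel can be expressed with OpenSSH's ProxyJump option, which is one common way to copy through an intermediate ssh server; the actual HPCC procedure may differ and should be requested from HPCC. All host names are placeholders, and the function name arrow_scp_command is hypothetical. The command is only printed, so it can be inspected before being run by hand.

```shell
#!/bin/bash
# Placeholder host names (assumptions): replace them with the addresses
# provided by HPCC for the ssh server (Chizen) and for Arrow.
JUMP_HOST="${JUMP_HOST:-userid@chizen.example.org}"
ARROW_HOST="${ARROW_HOST:-userid@arrow.example.org}"

# Build an scp command that reaches Arrow through the ssh server by
# means of the OpenSSH ProxyJump option. The command is printed, not
# executed.
arrow_scp_command() {
    local local_file=$1   # file on the local machine
    local remote_dir=$2   # destination directory on Arrow
    printf 'scp -o ProxyJump=%s %s %s:%s\n' \
        "$JUMP_HOST" "$local_file" "$ARROW_HOST" "$remote_dir"
}

arrow_scp_command input.dat /scratch/userid/job1/
```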

Set up execution environment

Overview of LMOD environment modules system

Each application, library and executable requires a specific environment. In addition, many software and system packages exist in several versions. To ensure the proper environment for every application, library or piece of system software, CUNY-HPCC uses an environment module system, which offers a quick and easy way to change a user's environment dynamically through modules. Each module is a file that describes the environment needed by a package. Modulefiles may be shared by all users on a system, and users may have their own collections of module files. Note that on the old servers (Penzias, Appel) HPCC uses a TCL-based module management system, which has fewer capabilities than LMOD. On Arrow HPCC uses only the LMOD environment management system. LMOD is Lua-based and can resolve module hierarchies. It is important to mention that LMOD understands and accepts TCL modules, so a user's module from Appel or Penzias can be transferred and used directly on Arrow. LMOD also allows shortcuts; for instance, ml can be used in place of the command module load. In addition, users may create collections of modules and store them under a particular name. These collections can be used for "fast load" of needed modules, or to supplement or replace the shared modulefiles.

Modules categories

Lmod modules are organized in categories. On Arrow the categories are Compilers, Libraries (Libs), Utilities (Util), Applications, Development Environments (DevEnv) and Communication (Net). To check the content of a category, users may use the command module category <name of the category>, for example:

module category Library

In the list of modules, a line such as

Compilers/GNU/13.1.0

from the EPYC directory denotes the GNU (C/C++/Fortran) compiler version 13.1.0, tuned for the AMD architecture.

List of available modules

To get a list of available modules, users may use the command

module avail

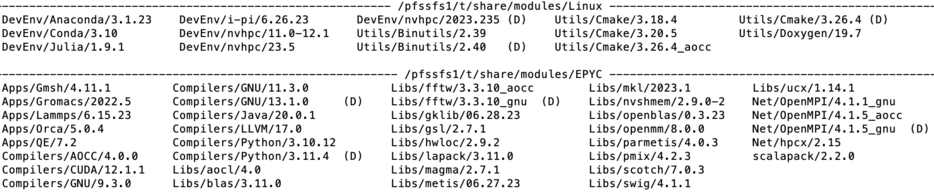

The output of this command on the Arrow server is shown in the figure. The (D) after a module's name denotes that the module is the default. The (L) denotes that the module is already loaded.

Load module(s) and check for loaded modules

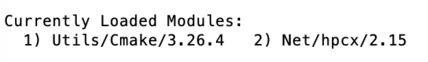

The command module load <name of the module> or module add <name of the module> loads the requested module. For example, the commands below load the modules for the cmake utility and the network interface. Users may check which modules are already loaded by typing module list; the figure shows the output of this command.

module load Utils/Cmake/3.26.4

module add Net/hpcx/2.15

module list

The command module add is equivalent to module load, as shown in the example above.

Module details

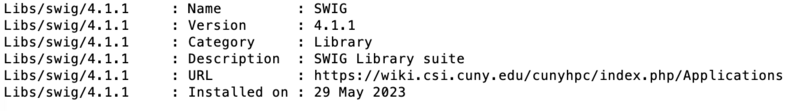

Information about a module is available via the whatis command. For example, for the library swig:

module whatis Libs/swig

Searching for modules

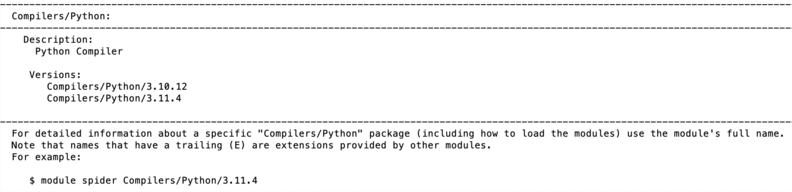

Modules can be searched for with the module spider command. For instance, a search for Python modules gives the following output:

module spider Python

Each modulefile holds the information needed to configure the shell environment for a specific software application, or to provide access to specific software tools and libraries. Modulefiles may be shared by all users on a system, and users may have their own collections of module files. The users' collections may be used for "fast load" of needed modules or to supplement or replace the shared modulefiles.
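A user collection for "fast load" might be created and reused as in the sketch below. It uses the Lmod commands module save, module purge and module restore together with the ml shortcut; the collection name mybuild and the function name demo_module_collection are hypothetical, and the module names are the ones used in the examples above.

```shell
#!/bin/bash
# Save a set of loaded modules as a named collection and restore it
# later. "mybuild" is a hypothetical collection name.
demo_module_collection() {
    # The module command exists only where Lmod has been initialized.
    if ! command -v module >/dev/null 2>&1; then
        echo "module command not found; run this on an HPCC server" >&2
        return 127
    fi
    module load Utils/Cmake/3.26.4 Net/hpcx/2.15
    module save mybuild       # store the loaded set under a name
    module purge              # unload everything
    module restore mybuild    # "fast load" of the saved set
    ml                        # shortcut; with no arguments it lists loaded modules
}
```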

Batch job submission system

SLURM is an open-source scheduler and batch system implemented at HPCC. It is used on all servers to submit jobs.

SLURM script structure

A Slurm script must do three things:

- prescribe the resource requirements for the job

- set the environment

- specify the work to be carried out in the form of shell commands

A simple SLURM script is given below:

#!/bin/bash

#SBATCH --job-name=test_job # some short name for a job

#SBATCH --nodes=1 # node count

#SBATCH --ntasks=1 # total number of tasks across all nodes

#SBATCH --cpus-per-task=1 # cpu-cores per task (>1 if multi-threaded tasks)

#SBATCH --mem-per-cpu=16 # memory per cpu-core

#SBATCH --time=00:10:00 # total run time limit (HH:MM:SS)

#SBATCH --mail-type=begin # send email when job begins

#SBATCH --mail-type=end # send email when job ends

#SBATCH --mail-user=<valid user email>

The first line of the Slurm script above specifies the Linux/Unix shell to be used. It is followed by a series of #SBATCH directives, which set the resource requirements and other parameters of the job. The script above requests 1 CPU-core and 16 MB of memory per core (a value without a unit suffix is read as megabytes; write, for example, 4G to request 4 GB) for 10 minutes of run time. Note that #SBATCH is a command to SLURM, while a # not followed by SBATCH is interpreted as a comment line. Users can submit two types of jobs, batch jobs and interactive jobs:

sbatch <name-of-slurm-script> submits a job to the scheduler

salloc requests an interactive job on compute node(s) (see below)
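When a batch job is accepted, sbatch prints a line of the form "Submitted batch job <id>". The sketch below shows one way to capture that job ID so the job can be followed with squeue. The function names parse_job_id and submit_and_report are hypothetical helpers, not SLURM commands.

```shell
#!/bin/bash
# Extract the numeric job ID from the line sbatch prints on success,
# which has the form "Submitted batch job <id>".
parse_job_id() {
    echo "$1" | sed -n 's/^Submitted batch job \([0-9][0-9]*\).*$/\1/p'
}

# Submit a script and report its job ID. Returns 127 where sbatch is
# not installed.
submit_and_report() {
    local script=$1
    if ! command -v sbatch >/dev/null 2>&1; then
        echo "sbatch not found" >&2
        return 127
    fi
    local out
    out=$(sbatch "$script") || return 1
    local id
    id=$(parse_job_id "$out")
    echo "job $id submitted; check it with: squeue -j $id"
}
```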

Job(s) execution time

The total job time is the sum of the time the job waits in a SLURM partition (queue) before being executed on the node(s) and the actual running time on the node(s). For parallel codes, the partition time (the time a job waits in the partition) increases with the requested resources, such as the number of CPU-cores. On the other hand, the execution time (time on nodes) decreases inversely with the resources. Each job has its own "sweet spot" that minimizes the time to solution. Users are encouraged to do several test runs to figure out what amount of requested resources works best for their job(s).
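One way to organize such test runs is to submit the same script several times with different core counts, relying on the fact that options given on the sbatch command line take precedence over the #SBATCH directives inside the script. The sketch below only prints the commands; the function name print_scaling_runs, the script name job.slurm and the scale_<n> job names are hypothetical.

```shell
#!/bin/bash
# Print (do not run) one sbatch command per requested core count.
# Command-line options override the matching #SBATCH lines in the script.
print_scaling_runs() {
    local script=$1   # job submission script to reuse
    shift             # remaining arguments: core counts to try
    local n
    for n in "$@"; do
        echo "sbatch --ntasks=$n --job-name=scale_$n $script"
    done
}

print_scaling_runs job.slurm 1 2 4 8
```

After the printed commands have been run, comparing the wait time and run time of each job shows which request gives the shortest time to solution.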

Partitions and quality of service (QOS)

In SLURM terminology a partition is a queue: jobs are placed in a partition for execution. The QOS mechanism allows control of resources. In particular, when applied to a partition, QOS makes it possible to create a 'floating' partition. A floating partition has access to all nodes' resources, but jobs in it may run only on the amount of resources assigned to it. QOS is used on all partitions to give different groups of users access to their dedicated resources. HPCC uses QOS to ensure that only core members of the NSF grant have access to the purchased resources. The partitions with QOS on Arrow are:

| Partition | Nodes | Allowed Users | Partition limitations |
|---|---|---|---|
| partnsf | n130, n131 | All registered core participants of NSF grant | 128 cores / 240 h per job |
| partchem | n133 | All registered users from Prof. S.Loverde Group | no limits |
| partmath | n136, n137 | All registered users from Prof. A.Poje and A.Kuklov | no limits |

Note that users can submit jobs only to the partition they are registered for; e.g. jobs from users registered for partnsf will be rejected on other partitions (and vice versa).

Working with QOS and partitions on Arrow

Every job submission script on Arrow must specify the proper QOS and partition. For instance, all jobs intended to use n133 must contain the following lines:

#SBATCH --qos=qoschem

#SBATCH --partition partchem

Similarly, all jobs intended to use n130 and n131 must have in their job submission script:

#SBATCH --qos=qosnsf

#SBATCH --partition partnsf

Note that Penzias does not use QOS: there, all users are assigned to a single pool and use a single partition called production. On Arrow, users are assigned to particular resources (nodes) through partitions and QOS. Thus users must adapt the scripts they copy from the Penzias server to match the QOS requirements on Arrow.
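Adapting a script copied from Penzias can be done by hand in a text editor. As an illustration only, the sketch below removes any existing partition or QOS directives and inserts the Arrow ones after the first line. The function name adapt_for_arrow is hypothetical, and the sketch assumes that the first line of the script is the #!/bin/bash line.

```shell
#!/bin/bash
# Hypothetical helper: print a copy of a job script with its partition
# and QOS directives replaced by the ones given as arguments.
adapt_for_arrow() {
    local in_script=$1   # script copied from Penzias
    local partition=$2   # e.g. partnsf
    local qos=$3         # e.g. qosnsf

    # Drop existing partition/QOS directives, then add the Arrow ones
    # right after line 1 (assumed to be the #!/bin/bash line).
    grep -v -E '^#SBATCH[[:space:]]+(--partition|-p|--qos)([[:space:]=]|$)' "$in_script" |
    awk -v p="$partition" -v q="$qos" '
        { print }
        NR == 1 {
            print "#SBATCH --qos=" q
            print "#SBATCH --partition " p
        }'
}
```

For example, adapt_for_arrow old_job.sh partnsf qosnsf > new_job.sh writes the adapted script to a new file and leaves the original untouched; old_job.sh and new_job.sh are placeholder names.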

SLURM commands:

Slurm commands resemble the commands used in the Portable Batch System (PBS). The table below compares the most common SLURM and PBS Pro commands.

A few examples follow.

If the files are in /global/u:

cd /scratch/<userid>

mkdir <job_name> && cd <job_name>

cp /global/u/<userid>/<myTask>/a.out ./

cp /global/u/<userid>/<myTask>/<mydatafile> ./

If the files are in SR (cunyZone):

cd /scratch/<userid>

mkdir <job_name> && cd <job_name>

iget myTask/a.out ./

iget myTask/<mydatafile> ./

Set up job environment

Users must load the proper environment before starting any job. The loaded environment will be automatically exported to the compute nodes at the time of execution. Users must use modules to load the environment. For example, to load the environment for the default version of GROMACS one must type:

module load gromacs

The list of available modules can be seen with command

module avail

The list of loaded modules can be seen with command

module list

More information about modules is provided in the "Modules and available third party software" section below.

Running jobs on HPC systems running SLURM scheduler

To be able to schedule your job for execution and to actually run your job on one or more compute nodes, SLURM needs to be instructed about your job’s parameters. These instructions are typically stored in a “job submit script”. In this section, we describe the information that needs to be included in a job submit script. The submit script typically includes

- job name

- queue name

- what compute resources (number of nodes, number of cores, the amount of memory, the amount of local scratch disk storage (applies to Andy, Herbert, and Penzias), and the number of GPUs) or other resources the job will need

- packing option

- actual commands that need to be executed (binary that needs to be run, input/output redirection, etc.).

A pro forma job submit script is provided below.

#!/bin/bash

#SBATCH --partition <queue_name>

#SBATCH -J <job_name>

#SBATCH --mem <????>

# change to the working directory

cd $SLURM_SUBMIT_DIR

echo ">>>> Begin <job_name>"

# actual binary (with IO redirections) and required input

# parameters is called in the next line

mpirun -np <cpus> <Program Name> <input_text_file> > <output_file_name> 2>&1

Note: The #SBATCH string must precede every SLURM parameter.

A # symbol at the beginning of any other line designates a comment line, which is ignored by SLURM.

Explanation of SLURM attributes and parameters:

- --partition <queue_name> The main available queue is “production” unless otherwise instructed.

  - “production” is the normal queue for processing your work on Penzias.

  - “development” is used when you are testing an application. Jobs submitted to this queue cannot request more than 8 cores or use more than 1 hour of total CPU time. If a job exceeds these limits, it will be automatically killed. The “development” queue has higher priority, and thus jobs in this queue have shorter wait times.

  - “interactive” is used for quick interactive tests. Jobs submitted to this queue run in an interactive terminal session on one of the compute nodes. They cannot use more than 4 cores or more than a total of 15 minutes of compute time.

- -J <job_name> The user must assign a name to each job they run. Names can be up to 15 alphanumeric characters in length.

- --ntasks=<cpus> The number of cpus (or cores) that the user wants to use.

  - Note: SLURM refers to “cores” as “cpus”; currently HPCC clusters map one thread to one core.

- --mem <mem> This parameter is required. It specifies how much memory the job needs (SLURM interprets the value per node).

- --gres <gpu:2> The number of graphics processing units that the user wants to use on a node (this parameter is only available on PENZIAS). For example, gpu:2 requests 2 GPUs.

Special note for MPI users

How these parameters are defined can significantly affect the run time of a job. For example, assume you need to run a job that requires 64 cores. This can be scheduled in a number of different ways. For example,

#SBATCH --nodes 8

#SBATCH --ntasks 64

will freely place the 8 job chunks on any nodes that have 8 cpus available. While this may minimize communication overhead in your MPI job, SLURM will not schedule the job until 8 nodes, each with 8 free cpus, become available. Consequently, the job may wait longer in the input queue before going into execution.

#SBATCH --nodes 32

#SBATCH --ntasks-per-node 2

will freely place 32 chunks of 2 cores each. Some of these nodes may have 8 free cores and others only 2. In this case the job ends up being more sparsely distributed across the system, and hence the total averaged latency may be larger than in the case with --nodes 8 and --ntasks 64.

mpirun -np <total tasks or total cpus>: this script line is used only for MPI jobs and defines the total number of cores required for the parallel MPI job.

Table 2 below shows the maximum values of the various SLURM parameters by system. Request only the resources you need, as requesting maximal resources will delay your job.

Serial Jobs

For serial jobs, --nodes 1 and --ntasks 1 should be used.

#!/bin/bash

#

# Typical job script to run a serial job in the production queue

#

#SBATCH --partition production

#SBATCH -J <job_name>

#SBATCH --nodes 1

#SBATCH --ntasks 1

# Change to working directory

cd $SLURM_SUBMIT_DIR

# Run my serial job

</path/to/your_binary> > <my_output> 2>&1

OpenMP and Threaded Parallel jobs

OpenMP jobs can run only on a single node. Therefore, for OpenMP jobs, --nodes 1 and --ntasks 1 should be used; -c (--cpus-per-task) should be set to [2, 3, 4, … n], where n must be less than or equal to the number of cores on a compute node.

Typically, OpenMP jobs will use the <mem> parameter and may request up to all the available memory on a node.

#!/bin/bash

#SBATCH -J Job_name

#SBATCH --partition production

#SBATCH --ntasks 1

#SBATCH --nodes 1

#SBATCH --mem=<mem>

#SBATCH -c 4

# Set OMP_NUM_THREADS to the same value as -c

# with a fallback in case it isn't set.

# SLURM_CPUS_PER_TASK is set to the value of -c, but only if -c is explicitly set

omp_threads=1

if [ -n "$SLURM_CPUS_PER_TASK" ]; then

omp_threads=$SLURM_CPUS_PER_TASK

else

omp_threads=1

fi

export OMP_NUM_THREADS=$omp_threads

# Run the threaded binary directly; mpirun is not needed for a single OpenMP task

</path/to/your_binary> > <my_output> 2>&1

MPI Distributed Memory Parallel Jobs

For an MPI job, --nodes and --ntasks can each be one or more, with -np >= 1.

#!/bin/bash

#

# Typical job script to run a distributed memory MPI job in the production queue requesting 16 cores in 16 nodes.

#

#SBATCH --partition production

#SBATCH -J <job_name>

#SBATCH --ntasks 16

#SBATCH --nodes 16

#SBATCH --mem=<mem>

# Change to working directory

cd $SLURM_SUBMIT_DIR

# Run my 16-core MPI job

mpirun -np 16 </path/to/your_binary> > <my_output> 2>&1

GPU-Accelerated Data Parallel Jobs

#!/bin/bash

#

# Typical job script to run a 1 CPU, 1 GPU batch job in the production queue

#

#SBATCH --partition production

#SBATCH -J <job_name>

#SBATCH --ntasks 1

#SBATCH --gres gpu:1

#SBATCH --mem <mem>

# Find out which compute node the job is using

hostname

# Change to working directory

cd $SLURM_SUBMIT_DIR

# Run my GPU job on a single node using 1 CPU and 1 GPU.

</path/to/your_binary> > <my_output> 2>&1

Submitting jobs for execution

NOTE: We do not allow users to run any production job on the login-node. It is acceptable to do short compiles on the login node, but all other jobs must be run by handing off the “job submit script” to SLURM running on the head-node. SLURM will then allocate resources on the compute-nodes for execution of the job.

The command to submit your “job submit script” (<job.script>) is:

sbatch <job.script>

This section is in development.

Saving output files and clean-up

Normally you expect certain data in the output files as a result of a job. There are a number of things that you may want to do with these files:

- Check the content of these outputs and discard them. In that case you can simply delete all unwanted data with the rm command.

- Move output files to your local workstation. You can use scp for small amounts of data and/or GlobusOnline for larger data transfers.

- You may also want to store the outputs on HPCC resources. In this case you can move your outputs either to /global/u or to the SR1 storage resource.

In all cases your /scratch/<userid> directory is expected to be empty afterwards. Output files stored inside /scratch/<userid> can be purged at any moment, except for files located under the /scratch/<userid>/<job_name> directory that are currently being used by active jobs.
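Saving outputs and cleaning scratch can be combined in one step, as in the sketch below. The function name archive_outputs is hypothetical and the directories are passed as arguments; the scratch copy is removed only after the copy to the destination has succeeded.

```shell
#!/bin/bash
# Hypothetical helper: copy everything from a finished job directory in
# scratch to a permanent location, then remove the scratch copy.
archive_outputs() {
    local job_dir=$1    # e.g. /scratch/<userid>/<job_name>
    local dest_dir=$2   # e.g. a directory under /global/u/<userid>

    # Refuse to continue if the job directory is missing or empty-named.
    if [ -z "$job_dir" ] || [ ! -d "$job_dir" ]; then
        echo "no such job directory: $job_dir" >&2
        return 1
    fi

    mkdir -p "$dest_dir" || return 1
    # Copy first; delete the scratch copy only if the copy succeeded.
    cp -r "$job_dir"/. "$dest_dir"/ || return 1
    rm -rf "$job_dir"
}
```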